Diversification of Data Center Applications

Growth in the Data Center market is well underway. In our last blog we identified some of its key drivers, such as Data Center Migration and the virtualization of 5G networks using Virtual Network Functions (VNFs). This diversification of applications, combined with 100G speed requirements, is putting pressure on Data Center Operators and Cloud Service Providers to verify performance.

Performance is often treated as a post-deployment issue to fix. For those responsible for initiating deployments, however, foresight into the issues that may occur makes it possible to mitigate Quality of Service (QoS) risks up front, before applications go anywhere near the live network.

Understanding Network Behaviour

The key to this is understanding network behaviour. Every network and application has its own characteristics, so network impairments such as latency and packet loss affect the performance of each application differently. Without insight into how each application performs under differing network conditions before it runs on the live network, engineers are left with big questions that are hard to answer. If guesswork is the only option, both the customer and the operator or service provider are exposed to severe risk.

In the use cases below, we identify some of the verification challenges engineers face and show how early insight into performance enables application verification before deployment.

Data Center Migration

For enterprise organisations seeking to migrate their Data Center operations, one of the biggest challenges is weighing the economic advantage of relocation against the risk to mission-critical applications. To address this, they often ask operators to supply a Proof of Concept (PoC) that explores “what if” scenarios and the solutions available to address potential problems before a migration.

In response, operators and service providers are emulating customer networks to investigate the impact that impairments such as latency and packet loss could have on performance. By demonstrating to customers how real-life, unpredictable issues (often caused by the subtle and complex interactions between networks, servers and applications) can be addressed, they can verify performance before migration, showing how risks would be mitigated and how Service Level Agreements (SLAs) will be met once live.

Data Center Interconnect (DCI)

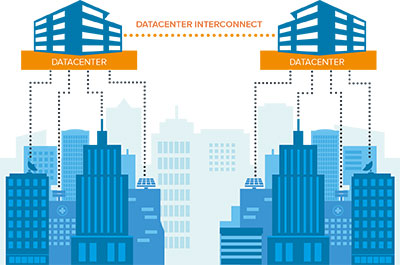

Data Center Interconnect is another use case where 100G performance verification is vital. Here, not all applications are hosted locally to the user; some are hosted at a remote data center that is connected to the local one.

As the diagram shows, this set-up means service requests may need to travel east-west (between data centers) as well as north-south (between the user and the data center). The extra east-west traffic increases latency on the network and can significantly affect performance for the end user.
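To give a sense of scale for that extra latency, the sketch below estimates only the propagation delay a request picks up each time it has to cross a DCI link to the remote data center and back. It assumes a fibre refractive index of 1.47 and that the fibre route length equals the stated distance; switching, queueing and serialization delays are ignored, and the function name `added_rtt_ms` is hypothetical.

```python
# Light in silica fibre travels at roughly c / n, i.e. about 4.9 microseconds per km.
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBRE_REFRACTIVE_INDEX = 1.47  # assumption: typical single-mode fibre


def added_rtt_ms(dci_distance_km: float, east_west_hops: int = 1) -> float:
    """Hypothetical helper: extra round-trip propagation delay in milliseconds
    when a request must cross a DCI link of the given length `east_west_hops` times."""
    one_way_s = dci_distance_km * FIBRE_REFRACTIVE_INDEX / SPEED_OF_LIGHT_KM_PER_S
    return 2 * one_way_s * east_west_hops * 1000


for km in (10, 100, 500):
    print(f"{km:>4} km DCI link: +{added_rtt_ms(km):.2f} ms per round trip")
```

Propagation delay is a floor that no amount of bandwidth removes, so an application that makes several sequential east-west calls per user request multiplies it accordingly.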

By emulating the exact network set-up and then adding latency and packet loss, engineers can identify performance boundaries for specific applications before deployment. This in turn highlights where load balancing and WAN optimisation technologies would improve overall QoS.
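As a rough illustration of what a "performance boundary" can look like, the sketch below sweeps latency and loss values through the well-known Mathis et al. approximation for a single long-lived TCP flow, throughput ≈ (MSS / RTT) × (C / √p). This is a simplified analytical model swapped in for illustration, not how Calnex SNE or any emulator works; the MSS, the 1 Gb/s per-flow target and all function names are hypothetical assumptions.

```python
import math

MSS_BYTES = 1460               # assumption: standard Ethernet TCP MSS
MATHIS_C = math.sqrt(3 / 2)    # constant from the Mathis et al. model (~1.22)
LINK_BPS = 100e9               # 100G link capacity caps any prediction
TARGET_BPS = 1e9               # hypothetical per-flow requirement for an application


def tcp_throughput_bps(rtt_s: float, loss_rate: float, mss_bytes: int = MSS_BYTES) -> float:
    """Mathis approximation of one TCP flow's throughput in bits/s."""
    if loss_rate <= 0:
        return math.inf  # model undefined without loss; the link becomes the limit
    return (mss_bytes * 8 / rtt_s) * (MATHIS_C / math.sqrt(loss_rate))


def max_loss_for_target(rtt_s: float, target_bps: float, mss_bytes: int = MSS_BYTES) -> float:
    """Largest loss rate at which the model still predicts target_bps.
    Rearranged from the formula above: p = (MSS * 8 * C / (RTT * target))^2."""
    return (mss_bytes * 8 * MATHIS_C / (rtt_s * target_bps)) ** 2


# Sweep emulated impairments and flag where the hypothetical target is missed.
for rtt_ms in (1, 5, 20):
    for loss in (1e-6, 1e-4, 1e-2):
        bps = min(LINK_BPS, tcp_throughput_bps(rtt_ms / 1000, loss))
        status = "OK" if bps >= TARGET_BPS else "FAIL"
        print(f"RTT {rtt_ms:>2} ms, loss {loss:.0e}: {bps / 1e9:8.3f} Gb/s {status}")
    print(f"  boundary at RTT {rtt_ms} ms: loss <= {max_loss_for_target(rtt_ms / 1000, TARGET_BPS):.2e}")
```

The sweep shows the pattern emulation is meant to expose: throughput falls as either RTT or loss rises, so an application that meets its target at one latency may fail at another. The model only gives a first-order estimate for bulk TCP transfers; measuring the real application under emulated impairments remains the reliable test.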

These Data Center examples highlight how pre-deployment testing removes the guesswork and provides the insight needed to make decisions and network changes that mitigate performance risks.

Look out for the next blog in this Data Center series, where we will look at the specific impairment challenges facing telecoms operators who are virtualizing their 5G networks and moving their 5G network core to public Data Centers using VNFs.

Related product: Calnex SNE

Related blog: Diverse Applications, 100G and the Data Center Verification Challenge